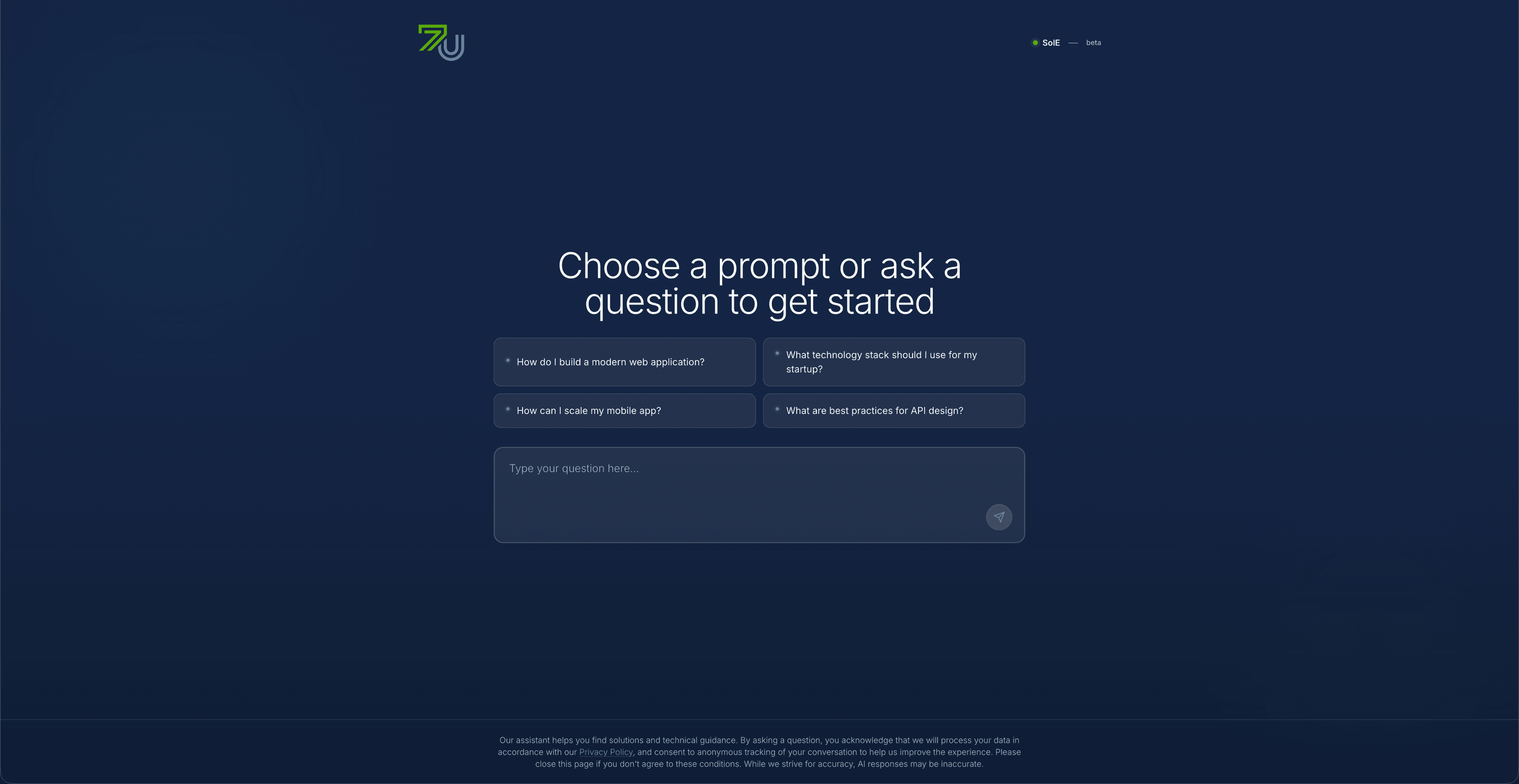

Overview

This case study describes how we turned “AI curiosity” into everyday tools people can actually use. Instead of a flashy demo chatbot, we built assistants that are clear, honest, and safe, and that can be reused for new ideas without starting from scratch.

Context and constraints

We kept seeing the same story: teams tried AI once, got a mix of impressive and worrying answers, and then stopped. The problem was not just the AI model but the lack of structure around it. For a founder, a patient, or a parent, it must be obvious what the assistant is for, what information it uses, and where its limits are. So we designed the “rules of the game” first and then fitted the AI into them.

How we approached it

We started by defining the real job of each assistant: who it serves, what it is allowed to answer, and when it must say “I don’t know” or “you should talk to a human”. Then we built a shared system that every assistant could use: one place to manage what information it can see, which topics are off-limits, which languages it supports, and how much it is allowed to spend. On top of that, each assistant got its own “personality” and content, but all of them followed the same simple, safe rules.

What we built

- A shared AI “backbone” that all assistants plug into, so we can manage rules, content, and safety in one place.

- Support for multiple languages so people can ask questions in the way that feels natural to them.

- Simple ways to control what information the assistant uses, so answers come only from trusted, approved sources.

- Safety checks that spot risky or sensitive questions and respond carefully or redirect to a human.

- Clear limits on usage and cost, so teams know how much they are spending and can adjust when needed.

- Built-in patterns for handing over to a human when the assistant should not decide on its own.

- Reusable building blocks, so launching a new assistant is more like “configuring” than “rebuilding”.
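To make the “configuring, not rebuilding” idea concrete, here is a minimal Python sketch. It assumes each assistant is described by a small settings object and that one shared gate checks every request against those settings before any model is called. The names `AssistantConfig` and `route_request`, the keyword-based topic matching, and the example assistant are all hypothetical illustrations, not the actual system behind these assistants.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AssistantConfig:
    """Hypothetical per-assistant settings layered on a shared core."""
    name: str
    purpose: str
    languages: frozenset[str]
    # Would restrict which documents retrieval may use; not exercised by the gate below.
    approved_sources: tuple[str, ...]
    blocked_topics: frozenset[str] = field(default_factory=frozenset)
    handoff_topics: frozenset[str] = field(default_factory=frozenset)
    monthly_budget_usd: float = 100.0


def route_request(config: AssistantConfig, question: str,
                  language: str, spent_usd: float) -> str:
    """Shared rules applied before any model call (hypothetical sketch).

    Returns "over_budget", "unsupported_language", "refuse", "handoff", or "answer".
    Keyword matching is a deliberately naive stand-in for a real safety classifier.
    """
    # Cost limit: stop once this month's spend reaches the configured cap.
    if spent_usd >= config.monthly_budget_usd:
        return "over_budget"
    # Language support: only answer in languages the assistant is configured for.
    if language not in config.languages:
        return "unsupported_language"
    text = question.lower()
    # Off-limits topics are declined outright.
    if any(topic in text for topic in config.blocked_topics):
        return "refuse"
    # Sensitive topics are routed to a human instead of answered.
    if any(topic in text for topic in config.handoff_topics):
        return "handoff"
    return "answer"


# Hypothetical example assistant; every value here is illustrative.
example = AssistantConfig(
    name="example-clinic-assistant",
    purpose="Explain clinic opening hours and appointment steps",
    languages=frozenset({"en", "de"}),
    approved_sources=("clinic_faq.md",),
    blocked_topics=frozenset({"dosage"}),
    handoff_topics=frozenset({"chest pain"}),
    monthly_budget_usd=50.0,
)

print(route_request(example, "When are you open?", "en", 10.0))          # answer
print(route_request(example, "I have chest pain", "en", 10.0))           # handoff
print(route_request(example, "What dosage should I take?", "en", 10.0))  # refuse
print(route_request(example, "When are you open?", "fr", 10.0))          # unsupported_language
print(route_request(example, "When are you open?", "en", 50.0))          # over_budget
```

Keeping the gate in one shared function is what lets a new assistant be mostly a new settings object. A real deployment would likely replace the keyword matching with a proper classifier and use the approved sources to limit what retrieval can return.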

Key capabilities

- Each assistant has a clear, human-readable purpose and set of limits.

- People can talk to the assistants in different languages without breaking behavior.

- Safety is treated as a first-class feature, not an afterthought.

- Answers are tied back to real, checked information instead of “best guesses”.

- Teams can see and manage how the assistants are used and how much they cost.

Implementation notes

- We built a single shared core system instead of separate one-off chatbots.

- We kept a clean separation between the model’s reasoning (“thinking”), the rules it must follow, and the data it draws on, so each can be improved safely on its own.

- The same setup works for sensitive areas like healthcare and education, where stakes are high.

- New assistants can be launched by reusing patterns instead of redesigning everything.

Outcome notes

- Ask SolE, Ask Hally, and Hey Bloom all run on the same safe, reusable foundation.

- Teams feel more comfortable rolling out AI because they understand the rules and limits.

- They avoid short-term “toy” projects and instead build a long-term AI capability.

- The organization can now explore new AI ideas without repeating the early mistakes.

Technologies